
Multiple URL extractor

1/7/2024

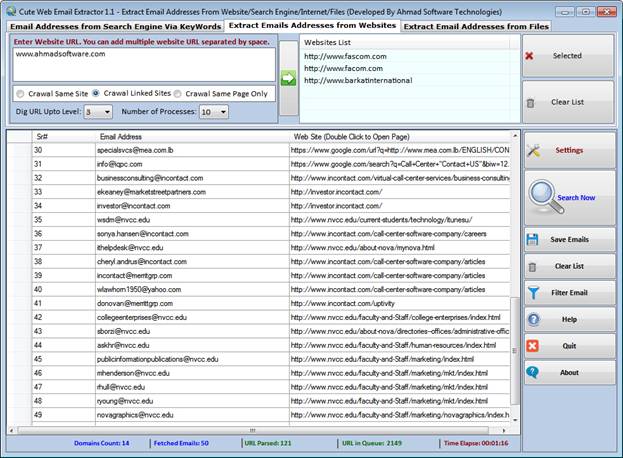

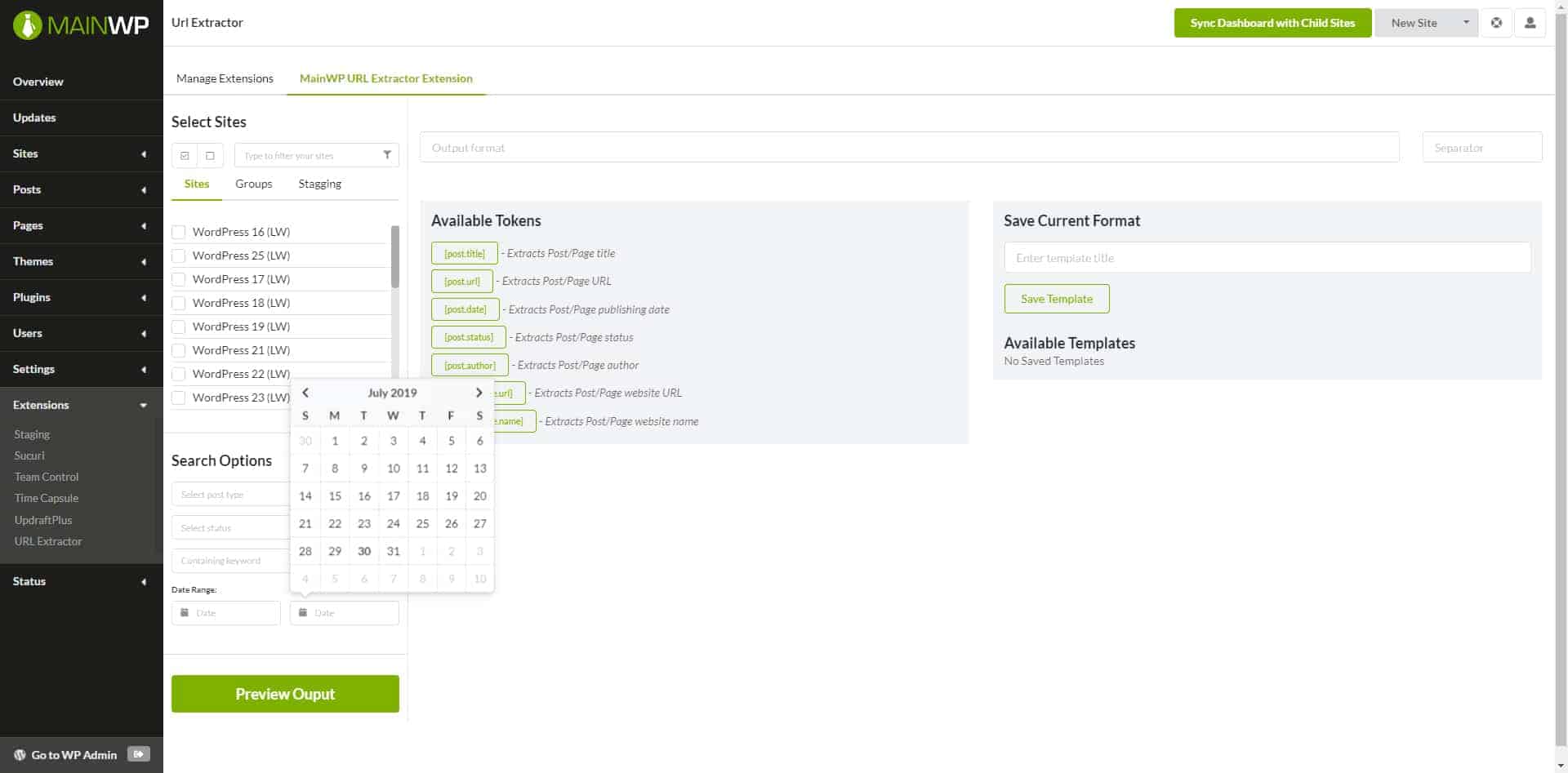

How does this URL extractor work? Working with it is very simple. First, it fetches the source of the web page you enter, and then it extracts the URLs from that text. The tool gives you the following results:

- Total number of links on the web page
- Anchor text of each link
- Do-follow or no-follow status of each link

It works with all standard links, including links with non-English characters if the link includes a trailing / followed by text. If you want to remove duplicate URLs, please use our Remove Duplicate Lines tool.

All the URLs of the books were located within heading tags. We therefore created the XPath expression `//h3/a` to avoid picking up any non-book URLs, because it only locates links inside `h3` headings. The returned URLs are formatted rather oddly: each one is preceded by a `./.` segment (each site is different, after all). We use the `replace` method to remove that segment, replacing it with an empty string. We then prepend the base URL to complete the address:

`url = self.base_url + url.replace('./.', '')`

The first three records returned by the code above.

The crawl rules restrict the followed links to URLs matching `books_1/`:

`rules = [Rule(LinkExtractor(allow='books_1/'), …`

Our free Contact Details Scraper can crawl any website and extract contact information for individuals listed on it, such as emails and phone numbers (from phone links or extracted from text). Scraping contact details gives your marketing and sales teams a fast way to get lead generation data. Harvesting them also helps you populate and maintain an up-to-date database of contacts, leads, and prospective customers. Instead of manually visiting web pages and copy-pasting names and numbers, you can extract the data and rapidly sort it in spreadsheets or feed it directly into your existing workflow. Check out our industry pages for use cases and more ideas on how you can take advantage of web scraping, and read our step-by-step guide to using Contact Details Scraper.

The actor offers several input options that let you specify which pages will be crawled:

- Start URLs: the list of web pages where the scraper should start. You can enter multiple URLs, upload a text file with URLs, or even use a Google Sheets document.
- Maximum link depth: how deep the actor follows links from the pages specified in Start URLs. If zero, the actor ignores the links and crawls only the Start URLs.
- Stay within domain: if enabled, the actor only follows links that are on the same domain as the referring page. For example, if the actor finds a link from a page on one domain to a page on a different domain, it will not crawl the second page, because the two domains are not the same.

The actor also accepts additional input options that let you specify proxy servers, limit the number of pages, and so on.

The actor stores its results in the default dataset associated with the actor run. You can then download them in formats such as JSON, HTML, CSV, XML, or Excel. For each page crawled, the following contact information is extracted:

- Emails
- Phone numbers: extracted from phone links in HTML.
- Uncertain phone numbers: extracted from the plain text of the web page using a number of regular expressions. Note that this approach can generate false positives.

The results also contain the URL of the web page, its domain, the referring URL (if the page was linked from another page), and the depth (how many links away from the Start URLs the page was found).
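The sketch below is a rough illustration of extracting URLs from text and dropping duplicates, written in Python. It is not the URL extractor tool's actual implementation. The regular expression `URL_RE` and the function name `extract_urls` are our own assumptions, and the pattern only recognizes `http://` and `https://` links.

```python
import re

# Assumed, deliberately simple pattern: "http://" or "https://" followed by a run
# of characters that are not whitespace, quotes, or angle brackets. Non-English
# letters are matched too, because [^...] in a str pattern is Unicode-aware.
URL_RE = re.compile(r"https?://[^\s<>\"']+")

# Punctuation that usually ends a sentence rather than a URL.
TRAILING_PUNCT = ".,;:!?"


def extract_urls(text, dedupe=True):
    """Return the URLs found in `text`, in order of first appearance."""
    urls = [match.rstrip(TRAILING_PUNCT) for match in URL_RE.findall(text)]
    if dedupe:
        # dict.fromkeys keeps the first occurrence of each URL and preserves order.
        urls = list(dict.fromkeys(urls))
    return urls


if __name__ == "__main__":
    sample = "See https://example.com/a, https://example.com/café/ and https://example.com/a."
    print(extract_urls(sample))
```

Keeping first-seen order with `dict.fromkeys` has the same effect on a list of URLs as a "remove duplicate lines" pass.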
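The URL extractor's per-page report (total links, anchor text, and follow status) can be approximated with Python's standard `html.parser`. This sketch rests on our own assumptions. A link counts only if its `<a>` tag has an `href`. A link is treated as no-follow when its `rel` attribute contains the `nofollow` token. The names `LinkReport` and `link_report` are hypothetical.

```python
from html.parser import HTMLParser


class LinkReport(HTMLParser):
    """Collects every <a href=...> with its anchor text and follow status."""

    def __init__(self):
        super().__init__()
        self.links = []       # one dict per link: href, text, follow
        self._current = None  # the link whose anchor text is being read

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        href = attributes.get("href")
        if not href:
            return  # anchors without href (e.g. <a name="top">) are not counted
        # rel is a space-separated list of tokens, e.g. "nofollow noopener".
        rel_tokens = (attributes.get("rel") or "").lower().split()
        self._current = {"href": href, "text": "", "follow": "nofollow" not in rel_tokens}

    def handle_data(self, data):
        if self._current is not None:
            self._current["text"] += data

    def handle_endtag(self, tag):
        if tag == "a" and self._current is not None:
            # Collapse whitespace left over from nested tags and line breaks.
            self._current["text"] = " ".join(self._current["text"].split())
            self.links.append(self._current)
            self._current = None


def link_report(html):
    parser = LinkReport()
    parser.feed(html)
    parser.close()
    return {"total": len(parser.links), "links": parser.links}
```

Text inside nested tags such as `<b>` is still collected, so anchor text like `Partner <b>site</b>` is reported as "Partner site".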
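In the book-scraping walkthrough, Scrapy evaluates the `//h3/a` XPath, but the idea can be seen without it. The sketch below uses only the standard library's `html.parser` to collect the `href` values of links inside `<h3>` headings. It then applies the same `replace('./.', '')` cleanup and base-URL prefix that the post describes. The sample markup, the `base_url` value, and the names `H3LinkParser` and `book_urls` are hypothetical, and this is not how Scrapy implements XPath selection.

```python
from html.parser import HTMLParser


class H3LinkParser(HTMLParser):
    """Collects href values of <a> tags nested inside <h3> headings,
    roughly what the XPath '//h3/a/@href' would select."""

    def __init__(self):
        super().__init__()
        self.h3_depth = 0  # > 0 while the parser is inside an <h3> element
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "h3":
            self.h3_depth += 1
        elif tag == "a" and self.h3_depth > 0:
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)

    def handle_endtag(self, tag):
        if tag == "h3" and self.h3_depth > 0:
            self.h3_depth -= 1


def book_urls(html, base_url):
    parser = H3LinkParser()
    parser.feed(html)
    parser.close()
    # Same cleanup as the post: drop the odd './.' segment, then prepend base_url.
    return [base_url + href.replace("./.", "") for href in parser.hrefs]


if __name__ == "__main__":
    page = (
        '<h2><a href="./.about.html">About</a></h2>'
        '<h3><a href="./.books_1/a.html">Book A</a></h3>'
        '<h3><a href="./.books_1/b.html">Book B</a></h3>'
    )
    print(book_urls(page, "https://example.com/"))
```

For ordinary relative links, `urllib.parse.urljoin` is usually the safer way to build absolute URLs. The string concatenation here only reproduces the post's approach for that site's `./.` prefix.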
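In Scrapy, the `allow` argument of `LinkExtractor` is a regular expression (or a list of them) that absolute URLs must match to be extracted. When no pattern is given, all links are kept. The sketch below shows that filtering step using only the standard library. The helper name `allowed_links` and the sample URLs are our own. It illustrates the matching rule only and is not Scrapy code.

```python
import re


def allowed_links(urls, allow=()):
    """Keep the URLs that match at least one `allow` regex, searched anywhere
    in the URL. With no patterns, every URL is kept."""
    if isinstance(allow, str):
        allow = [allow]
    patterns = [re.compile(p) for p in allow]
    if not patterns:
        return list(urls)
    return [url for url in urls if any(p.search(url) for p in patterns)]


if __name__ == "__main__":
    candidates = [
        "https://example.com/books_1/a.html",
        "https://example.com/about.html",
        "https://example.com/catalogue/books_1/b.html",
    ]
    print(allowed_links(candidates, allow="books_1/"))
```

Because the pattern is searched rather than anchored, `books_1/` also matches deeper paths such as `/catalogue/books_1/`.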
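The Contact Details Scraper's "Maximum link depth" and "Stay within domain" options can be illustrated with a small breadth-first crawl. This is a sketch under stated assumptions, not the actor's code. To keep it offline, `get_links` is a hypothetical stand-in for fetching and parsing a page. The record keys (`url`, `domain`, `referrer`, `depth`) are our own names for the fields the post lists. "Same domain" is taken to mean an identical host (`netloc`).

```python
from collections import deque
from urllib.parse import urlparse


def crawl(start_urls, get_links, max_depth=1, same_domain=True):
    """Breadth-first crawl. get_links(url) returns the URLs linked from `url`.
    max_depth=0 visits only the start URLs."""
    seen = set()
    results = []
    queue = deque((url, None, 0) for url in start_urls)  # (url, referrer, depth)
    while queue:
        url, referrer, depth = queue.popleft()
        if url in seen:
            continue
        seen.add(url)
        domain = urlparse(url).netloc
        results.append({"url": url, "domain": domain, "referrer": referrer, "depth": depth})
        if depth >= max_depth:
            continue  # do not follow links any deeper
        for link in get_links(url):
            # "Stay within domain": skip links whose host differs from the referring page.
            if same_domain and urlparse(link).netloc != domain:
                continue
            queue.append((link, url, depth + 1))
    return results


if __name__ == "__main__":
    graph = {  # hypothetical site structure
        "https://a.example/": ["https://a.example/about", "https://b.example/"],
        "https://a.example/about": ["https://a.example/team"],
    }
    for record in crawl(["https://a.example/"], lambda u: graph.get(u, []), max_depth=2):
        print(record)
```

Breadth-first order guarantees that each page is first reached by a shortest path, so the recorded depth equals the number of links between it and the nearest start URL.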
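The split between "phone numbers" and "uncertain phone numbers" can be sketched as two passes over a page. The first pass reads `tel:` links, which are explicit. The second runs a regular expression over the visible text, which is where false positives come from. The pattern `PHONE_RE` and the names `PhoneParser` and `extract_phones` are our own assumptions, not the scraper's actual rules. In the sample, a date is deliberately matched as a "phone number".

```python
import re
from html.parser import HTMLParser

# Assumed loose pattern: optional "+", then at least 8 characters of digits,
# spaces, dots, dashes, or parentheses, starting and ending with a digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{6,}\d")


class PhoneParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.tel_links = []   # numbers taken from <a href="tel:...">
        self.text_parts = []  # visible text, for the regex pass

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href") or ""
            if href.lower().startswith("tel:"):
                self.tel_links.append(href[4:].lstrip("/"))  # handles tel: and tel://

    def handle_data(self, data):
        self.text_parts.append(data)


def extract_phones(html):
    parser = PhoneParser()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.text_parts)
    uncertain = [m.strip() for m in PHONE_RE.findall(text)]
    return parser.tel_links, uncertain


if __name__ == "__main__":
    page = '<p>Call <a href="tel:+15551234567">us</a> or 555-987-6543. Order #2024-01-07.</p>'
    print(extract_phones(page))
```

Here the regex pass returns both `555-987-6543` and the date `2024-01-07`. A loose pattern catches many number formats but cannot tell a phone number from other digit sequences.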
